NvInferAttributeModel

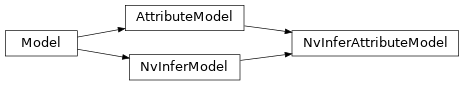

NvInferAttributeModel inheritance diagram

- class savant.deepstream.nvinfer.model.NvInferAttributeModel(local_path=None, remote=None, model_file=None, batch_size=1, precision=ModelPrecision.FP16, input=NvInferModelInput(object='auto.frame', layer_name=None, shape=None, maintain_aspect_ratio=False, symmetric_padding=False, scale_factor=1.0, offsets=(0.0, 0.0, 0.0), color_format=<ModelColorFormat.RGB: 0>, preprocess_object_meta=None, preprocess_object_image=None, object_min_width=None, object_min_height=None, object_max_width=None, object_max_height=None), output=AttributeModelOutput(layer_names=[], converter=None, attributes='???'), format=None, config_file=None, int8_calib_file=None, engine_file=None, proto_file=None, custom_config_file=None, mean_file=None, label_file=None, tlt_model_key=None, gpu_id=0, interval=0, workspace_size=6144, custom_lib_path=None, engine_create_func_name=None, layer_device_precision=<factory>, enable_dla=None, use_dla_core=None, scaling_compute_hw=None, scaling_filter=None, parse_classifier_func_name=None)

NvInferAttribute model configuration template.

Use to configure classifiers, etc.

Example

    - element: nvinfer@classifier
      name: Secondary_CarColor
      model:
        format: caffe
        model_file: resnet18.caffemodel
        mean_file: mean.ppm
        label_file: labels.txt
        precision: int8
        int8_calib_file: cal_trt.bin
        batch_size: 16
        input:
          object: Primary_Detector.Car
          object_min_width: 64
          object_min_height: 64
          color_format: bgr
        output:
          layer_names: [predictions/Softmax]
          attributes:
            - name: car_color
              threshold: 0.51

- batch_size: int = 1

Number of frames or objects to be inferred together in a batch.

Note

If the model is an NvInferModel and it is configured to use the TRT engine file directly, the default value for batch_size will be taken from the engine file name, by parsing it according to the scheme {model_name}_b{batch_size}_gpu{gpu_id}_{precision}.engine
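The naming scheme in the note above can be illustrated with a small parser. This is a minimal sketch, not Savant's actual implementation: the helper `parse_engine_file_name`, the regular expression, and the `EngineNameParts` type are hypothetical names introduced here, and the assumption that the precision token is alphanumeric (for example `fp16` or `int8`) is not confirmed by the source.

```python
import re
from typing import NamedTuple, Optional

# Hypothetical pattern mirroring the documented scheme:
# {model_name}_b{batch_size}_gpu{gpu_id}_{precision}.engine
ENGINE_NAME_RE = re.compile(
    r"^(?P<model_name>.+)"
    r"_b(?P<batch_size>\d+)"
    r"_gpu(?P<gpu_id>\d+)"
    r"_(?P<precision>[A-Za-z0-9]+)\.engine$"
)


class EngineNameParts(NamedTuple):
    """Values recovered from an engine file name (illustrative type)."""

    model_name: str
    batch_size: int
    gpu_id: int
    precision: str


def parse_engine_file_name(file_name: str) -> Optional[EngineNameParts]:
    """Return the parts encoded in the name, or None if it does not match."""
    match = ENGINE_NAME_RE.match(file_name)
    if match is None:
        return None
    return EngineNameParts(
        model_name=match["model_name"],
        batch_size=int(match["batch_size"]),
        gpu_id=int(match["gpu_id"]),
        precision=match["precision"].lower(),
    )


# Example file name built from the values in the classifier example above.
parts = parse_engine_file_name("resnet18.caffemodel_b16_gpu0_int8.engine")
```

A name that does not follow the scheme yields `None`, in which case a caller would presumably fall back to the explicitly configured values.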

- custom_config_file: str | None = None

Configuration file for a custom model, e.g. for YOLO. By default, the model file name (model_file) will be used with the extension .cfg.

- custom_lib_path: str | None = None

Absolute pathname of a library containing custom method implementations for custom models.

- engine_create_func_name: str | None = None

Name of the custom TensorRT CudaEngine creation function.

- format: NvInferModelFormat | None = None

Model file format.

Example

    format: onnx
    # format: caffe
    # etc.
    # look in enum for full list of format options

- gpu_id: int = 0

Device ID of GPU to use for pre-processing/inference (dGPU only).

Note

If the model is configured to use the TRT engine file directly, the default value for gpu_id will be taken from the engine_file, by parsing it according to the scheme {model_name}_b{batch_size}_gpu{gpu_id}_{precision}.engine

- input: NvInferModelInput = NvInferModelInput(object='auto.frame', layer_name=None, shape=None, maintain_aspect_ratio=False, symmetric_padding=False, scale_factor=1.0, offsets=(0.0, 0.0, 0.0), color_format=<ModelColorFormat.RGB: 0>, preprocess_object_meta=None, preprocess_object_image=None, object_min_width=None, object_min_height=None, object_max_width=None, object_max_height=None)

Optional configuration of input data and custom preprocessing methods for a model. If not set, the input defaults to the entire frame.

- int8_calib_file: str | None = None

INT8 calibration file for dynamic range adjustment with an FP32 model. Required only for models in INT8.

- local_path: str | None = None

Path where all the necessary model files are placed. By default, the value of module parameter “model_path” and element name will be used (“model_path / element_name”).

- model_file: str | None = None

The model file, e.g. yolov4.onnx.

Note

The model file is specified without a location. The absolute path to the model file will be defined as “local_path/model_file”.
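How local_path and model_file combine can be sketched as follows. This is an illustration under stated assumptions rather than Savant's own code: `resolve_model_file` is a hypothetical helper, and the `/models` and `/opt/custom` directories are made-up values standing in for the module's model_path parameter and an explicit local_path.

```python
from pathlib import Path
from typing import Optional


def resolve_model_file(
    model_path: str,
    element_name: str,
    model_file: str,
    local_path: Optional[str] = None,
) -> Path:
    """Build the model file location from the documented rules.

    When local_path is not set, the directory defaults to
    "model_path / element_name"; the file itself is "local_path / model_file".
    """
    base_dir = Path(local_path) if local_path else Path(model_path) / element_name
    return base_dir / model_file


# Default location: derived from the module's model_path and the element name.
default_location = resolve_model_file(
    "/models", "Secondary_CarColor", "resnet18.caffemodel"
)

# Explicit local_path: model_path and element name are ignored.
custom_location = resolve_model_file(
    "/models", "Secondary_CarColor", "resnet18.caffemodel", local_path="/opt/custom"
)
```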

- output: AttributeModelOutput = AttributeModelOutput(layer_names=[], converter=None, attributes='???')

Configuration for post-processing of an attribute model’s results.

- precision: ModelPrecision = 2

Data format to be used by inference.

Example

    precision: fp16
    # precision: int8
    # precision: fp32

Note

If the model is an NvInferModel and it is configured to use the TRT engine file directly, the default value for precision will be taken from the engine file name, by parsing it according to the scheme {model_name}_b{batch_size}_gpu{gpu_id}_{precision}.engine

- proto_file: str | None = None

Caffe model prototxt file. By default, the model file name (model_file) will be used with the extension .prototxt.
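The default name derivation described for proto_file (and, analogously, for custom_config_file with .cfg) might look like the sketch below. It assumes the new extension replaces the model file's existing extension rather than being appended to it; the source does not say which, and `default_sidecar_name` is a hypothetical helper, not part of Savant.

```python
from pathlib import Path


def default_sidecar_name(model_file: str, extension: str) -> str:
    """Derive a companion file name by swapping the model file's extension.

    Assumption: the existing extension is replaced, not kept.
    """
    return str(Path(model_file).with_suffix(extension))


# Caffe prototxt derived from the model file used in the example above.
proto_default = default_sidecar_name("resnet18.caffemodel", ".prototxt")

# Custom config for a hypothetical YOLO weights file.
config_default = default_sidecar_name("yolov4.weights", ".cfg")
```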

- remote: RemoteFile | None = None

Configuration of the remote location of the model files. Supported schemes: s3, http, https, ftp.

- scaling_compute_hw: NvInferScalingComputeHW | None = None

Specifies the hardware to be used for scaling compute.